RePaint

RePaint

Official PyTorch Code and Models of "RePaint: Inpainting using Denoising Diffusion Probabilistic Models", CVPR 2022

Top Related Projects

High-Resolution Image Synthesis with Latent Diffusion Models

PyTorch package for the discrete VAE used for DALL·E.

Implementation of DALL-E 2, OpenAI's updated text-to-image synthesis neural network, in Pytorch

High-Resolution Image Synthesis with Latent Diffusion Models

Quick Overview

RePaint is an open-source project that implements a novel inpainting algorithm using diffusion models. It aims to provide high-quality image inpainting results by leveraging the power of diffusion models, which have shown impressive capabilities in image generation tasks.

Pros

- Produces high-quality inpainting results, especially for complex scenes and textures

- Utilizes state-of-the-art diffusion models for image generation

- Supports various mask shapes and sizes for flexible inpainting tasks

- Open-source implementation allows for further research and improvements

Cons

- Requires significant computational resources, especially for high-resolution images

- May have longer processing times compared to some traditional inpainting methods

- Depends on pre-trained diffusion models, which may limit its adaptability to specific domains

- Potential learning curve for users unfamiliar with diffusion models and deep learning frameworks

Code Examples

# Load an image and mask

image = load_image("input_image.png")

mask = load_mask("input_mask.png")

# Initialize the RePaint model

model = RePaintModel.from_pretrained("repaint-diffusion-v1")

# Perform inpainting

inpainted_image = model.inpaint(image, mask)

# Save the result

save_image(inpainted_image, "output_image.png")

# Customize inpainting parameters

model = RePaintModel.from_pretrained("repaint-diffusion-v1")

inpainted_image = model.inpaint(

image,

mask,

num_iterations=1000,

guidance_scale=7.5,

seed=42

)

# Batch processing multiple images

image_paths = ["image1.png", "image2.png", "image3.png"]

mask_paths = ["mask1.png", "mask2.png", "mask3.png"]

for img_path, mask_path in zip(image_paths, mask_paths):

image = load_image(img_path)

mask = load_mask(mask_path)

inpainted = model.inpaint(image, mask)

save_image(inpainted, f"inpainted_{img_path}")

Getting Started

To get started with RePaint:

-

Clone the repository:

git clone https://github.com/andreas128/RePaint.git cd RePaint -

Install dependencies:

pip install -r requirements.txt -

Download pre-trained models:

python download_models.py -

Run the inpainting script:

python inpaint.py --input_image path/to/image.png --input_mask path/to/mask.png --output_image output.png

For more detailed instructions and advanced usage, refer to the project's README and documentation.

Competitor Comparisons

High-Resolution Image Synthesis with Latent Diffusion Models

Pros of stablediffusion

- More versatile, capable of generating a wide range of images from text prompts

- Larger community and more active development, with frequent updates and improvements

- Supports various fine-tuning and customization options

Cons of stablediffusion

- Requires more computational resources and longer processing times

- May produce less accurate results for specific inpainting tasks compared to RePaint

Code Comparison

RePaint:

def inpaint(masked_image, mask, prompt):

return repaint_model(masked_image, mask, prompt)

stablediffusion:

def generate_image(prompt, guidance_scale=7.5, num_inference_steps=50):

return pipeline(prompt, guidance_scale=guidance_scale, num_inference_steps=num_inference_steps)

Summary

While RePaint focuses specifically on inpainting tasks, stablediffusion offers a more comprehensive image generation solution. RePaint may provide better results for targeted inpainting, but stablediffusion's versatility and active development make it a more popular choice for general image generation tasks. The code examples highlight the different approaches, with RePaint designed for inpainting and stablediffusion for text-to-image generation.

PyTorch package for the discrete VAE used for DALL·E.

Pros of DALL-E

- More versatile, capable of generating a wide range of images from text prompts

- Produces higher quality, more coherent images overall

- Backed by OpenAI, with potential for continued improvements and updates

Cons of DALL-E

- Closed-source, limiting accessibility and customization options

- Requires significant computational resources to run and train

Code Comparison

While a direct code comparison is not possible due to DALL-E being closed-source, we can compare the usage of RePaint with a hypothetical DALL-E API:

RePaint:

from repaint import RePaint

model = RePaint()

image = model.inpaint(masked_image, mask, prompt)

Hypothetical DALL-E API:

import dalle_api

image = dalle_api.generate_image(prompt)

Key Differences

- RePaint focuses on image inpainting, while DALL-E is designed for general image generation from text prompts

- RePaint is open-source and can be run locally, while DALL-E is a closed system

- DALL-E typically produces higher quality results but requires more resources and is less accessible

Implementation of DALL-E 2, OpenAI's updated text-to-image synthesis neural network, in Pytorch

Pros of DALLE2-pytorch

- Implements the more advanced DALL-E 2 model, potentially offering higher-quality image generation

- Provides a PyTorch implementation, which may be more familiar to many researchers and developers

- Offers more flexibility and customization options for training and inference

Cons of DALLE2-pytorch

- May require more computational resources due to the complexity of the DALL-E 2 model

- Could be more challenging to set up and use for beginners compared to RePaint

- Might have less stable or consistent results, as it's an unofficial implementation of a complex model

Code Comparison

RePaint:

model = RePaint(

dim = 64,

dim_mults = (1, 2, 4, 8)

)

DALLE2-pytorch:

dalle2 = DALLE2(

dim = 128,

num_tokens = 16384,

depth = 12,

heads = 16

)

Both repositories provide implementations of image generation models, but they focus on different approaches. RePaint is centered around inpainting and restoration, while DALLE2-pytorch aims to replicate the text-to-image capabilities of DALL-E 2. The code snippets show the initialization of the main models in each repository, highlighting the different parameters and architectures used.

High-Resolution Image Synthesis with Latent Diffusion Models

Pros of latent-diffusion

- More versatile, capable of generating diverse types of images

- Faster inference time due to working in compressed latent space

- Supports text-to-image generation and other conditional inputs

Cons of latent-diffusion

- May produce less precise results for specific inpainting tasks

- Requires more computational resources for training

Code comparison

RePaint:

model = RePaint(

img_size=256,

timesteps=1000,

sampling_timesteps=250,

loss_type='l1'

)

latent-diffusion:

model = LatentDiffusion(

linear_start=0.00085,

linear_end=0.0120,

num_timesteps=1000,

latent_channels=4,

channels=128

)

Key differences

- RePaint focuses specifically on image inpainting tasks

- latent-diffusion is a more general-purpose image generation model

- RePaint uses a pixel-space diffusion process, while latent-diffusion operates in a compressed latent space

- latent-diffusion supports a wider range of conditioning inputs, including text prompts

Both projects are based on diffusion models but serve different primary purposes and have distinct architectural approaches.

Convert  designs to code with AI

designs to code with AI

Introducing Visual Copilot: A new AI model to turn Figma designs to high quality code using your components.

Try Visual CopilotREADME

RePaint

Inpainting using Denoising Diffusion Probabilistic Models

CVPR 2022 [Paper]

Setup

1. Code

git clone https://github.com/andreas128/RePaint.git

2. Environment

pip install numpy torch blobfile tqdm pyYaml pillow # e.g. torch 1.7.1+cu110.

3. Download models and data

pip install --upgrade gdown && bash ./download.sh

That downloads the models for ImageNet, CelebA-HQ, and Places2, as well as the face example and example masks.

4. Run example

python test.py --conf_path confs/face_example.yml

Find the output in ./log/face_example/inpainted

Note: After refactoring the code, we did not reevaluate all experiments.

RePaint fills a missing image part using diffusion models

|

|

What are the blue parts?

Those parts are missing and therefore have to be filled by RePaint.

RePaint generates the missing parts inspired by the known parts.

How does it work?

RePaint starts from pure noise. Then the image is denoised step-by-step.

It uses the known part to fill the unknown part in each step.

Why does the noise level fluctuate during generation?

Our noise schedule improves the harmony between the generated and

the known part [4.2 Resampling].

Details on data

Which datasets and masks have a ready-to-use config file?

We provide config files for ImageNet (inet256), CelebA-HQ (c256) and Places2 (p256) for the masks "thin", "thick", "every second line", "super-resolution", "expand" and "half" in ./confs. You can use them as shown in the example above.

How to prepare the test data?

We use LaMa for validation and testing. Follow their instructions and add the images as specified in the config files. When you download the data using download.sh, you can see examples of masks we used.

How to apply it to other images?

Copy the config file for the dataset that matches your data best (for faces aligned like CelebA-HQ _c256, for diverse images _inet256). Then set the gt_path and mask_path to where your input is. The masks have the value 255 for known regions and 0 for unknown areas (the ones that get generated).

How to apply it for other datasets?

If you work with other data than faces, places or general images, train a model using the guided-diffusion repository. Note that RePaint is an inference scheme. We do not train or finetune the diffusion model but condition pre-trained models.

Adapt the code

How to design a new schedule?

Fill in your own parameters in this line to visualize the schedule using python guided_diffusion/scheduler.py. Then copy a config file, set your parameters in these lines and run the inference using python test.py --conf_path confs/my_schedule.yml.

How to speed up the inference?

The following settings are in the schedule_jump_params key in the config files. You can visualize them as described above.

- Reduce

t_T, the total number of steps (without resampling). The lower it is, the more noise gets removed per step. - Reduce

jump_n_sampleto resample fewer times. - Apply resampling not from the beginning but only after a specific time by setting

start_resampling.

Code overview

- Schedule: The list of diffusion times t which will be traversed are obtained in this line. e.g. times = [249, 248, 249, 248, 247, 248, 247, 248, 247, 246, ...]

- Denoise: Reverse diffusion steps from xt (more noise) to a xt-1 (less noisy) are done below this line.

- Predict: The model is called here and obtains xt and the time t to predict a tensor with 6 channels containing information about the mean and variance of xt-1. Then the value range of the variance is adjusted here. The mean of xt-1 is obtained by the weighted sum of the estimated x0 and xt here. The obtained mean and variance is used here to sample xt-1. (This is the original reverse step from guided-diffusion. )

- Condition: The known part of the input image needs to have the same amount of noise as the part that the diffusion model generates to join them. The required amount of noise is calculated here and added to the known part here. The generated and sampled parts get joined using a maks here.

- Undo: The forward diffusion steps from xt-1 to xt is done after this line. The noise gets added to xt-1 here.

Issues

Do you have further questions?

Please open an issue, and we will try to help you.

Did you find a mistake?

Please create a pull request. For examply by clicking the pencil button on the top right on the github page.

RePaint on diverse content and shapes of missing regions

The blue region is unknown and filled by RePaint:

Note: RePaint creates many meaningful fillings.

- Face: Expressions and features like an earring or a mole.

- Computer: The computer screen shows different images, text, and even a logo.

- Greens: RePaint makes sense of the tiny known part and incorporates it in a beetle, spaghetti, and plants.

- Garden: From simple filling like a curtain to complex filling like a human.

Extreme Case 1: Generate every second line

- Every Second line of the input image is unknown.

- Most inpainting methods fail on such masks.

Extreme Case 2: Upscale an image

- The inpainting only knows pixels with a stridden access of 2.

- A ratio of 3/4 of the image has to be filled.

- This is equivalent to Super-Resolution with the Nearest Neighbor kernel.

RePaint conditions the diffusion model on the known part

- RePaint uses unconditionally trained Denoising Diffusion Probabilistic Models.

- We condition during inference on the given image content.

Intuition of one conditioned denoising step:

- Sample the known part: Add gaussian noise to the known regions of the image.

We obtain a noisy image that follows the denoising process exactly. - Denoise one step: Denoise the previous image for one step. This generates

content for the unknown region conditioned on the known region. - Join: Merge the images from both steps.

Details are in Algorithm 1 on Page 5. [Paper]

How to harmonize the generated with the known part?

- Fail: When using only the algorithm above, the filling is not well harmonized with the known part (n=1).

- Fix: When applying the [4.2 Resampling] technique, the images are better harmonized (n>1).

RePaint Fails

- The ImageNet model is biased towards inpainting dogs.

- This is due to the high ratio of dog images in ImageNet.

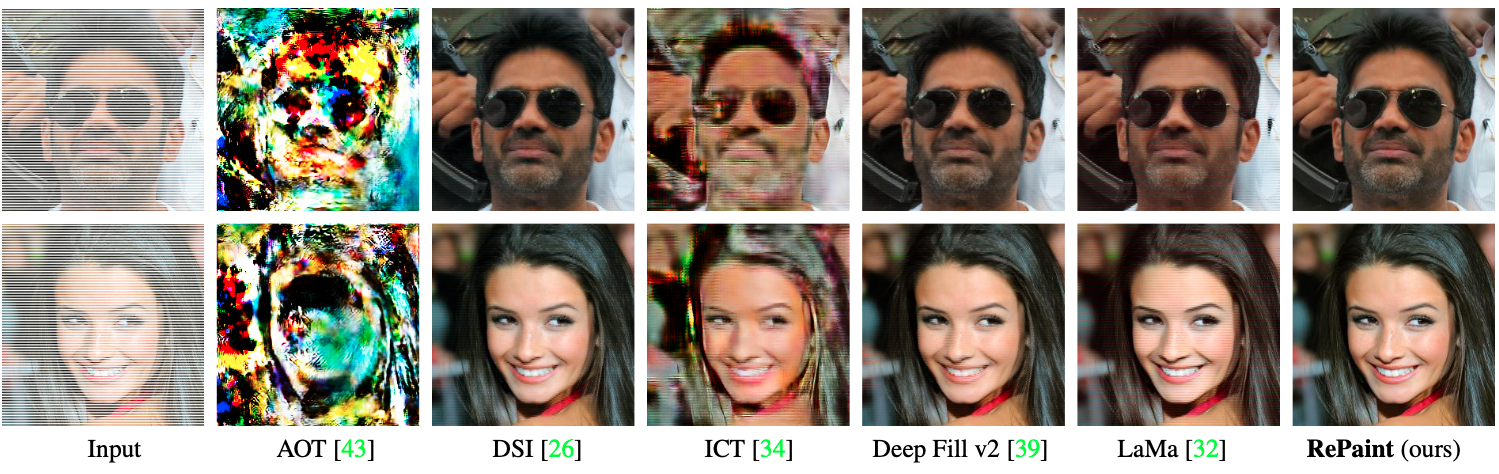

User Study State-of-the-Art Comparison

- Outperforms autoregression-based and GAN-based SOTA methods,

with 95% significance for all masks except for two inconclusive cases. - The user study was done for six different masks on three datasets.

- RePaint outperformed SOTA methods in 42 of 44 cases. [Paper]

Explore the Visual Examples

- Datasets: CelebA-HQ, ImageNet, Places2

- Masks: Random strokes, half image, huge, sparse

- Explore more examples like this in the [Appendix].

Acknowledgement

This work was supported by the ETH Zürich Fund (OK), a Huawei Technologies Oy (Finland) project, and an Nvidia GPU grant.

This repository is based on guided-diffuion from OpenAI.

Top Related Projects

High-Resolution Image Synthesis with Latent Diffusion Models

PyTorch package for the discrete VAE used for DALL·E.

Implementation of DALL-E 2, OpenAI's updated text-to-image synthesis neural network, in Pytorch

High-Resolution Image Synthesis with Latent Diffusion Models

Convert  designs to code with AI

designs to code with AI

Introducing Visual Copilot: A new AI model to turn Figma designs to high quality code using your components.

Try Visual Copilot