Top Related Projects

- whisper.cpp: Port of OpenAI's Whisper model in C/C++

- WhisperX: Automatic Speech Recognition with Word-level Timestamps (& Diarization)

- faster-whisper: Faster Whisper transcription with CTranslate2

- Whisper (Const-me): High-performance GPGPU inference of OpenAI's Whisper automatic speech recognition (ASR) model

Quick Overview

Whisper is an automatic speech recognition (ASR) system developed by OpenAI. It's designed to convert audio into text, supporting multiple languages and tasks such as transcription, translation, and language identification. Whisper is known for its robustness and versatility across various audio conditions.

Pros

- Multilingual support for over 90 languages

- Capable of performing transcription, translation, and language identification

- Open-source and freely available for use and modification

- Robust performance across different audio qualities and accents

Cons

- Requires significant computational resources, especially for larger models

- May struggle with highly technical or domain-specific terminology

- Not optimized for real-time transcription

- Accuracy can vary depending on the chosen model size and audio quality

Code Examples

- Transcribing an audio file:

import whisper

model = whisper.load_model("base")

result = model.transcribe("audio.mp3")

print(result["text"])

- Translating speech to English:

import whisper

model = whisper.load_model("base")

result = model.transcribe("non_english_audio.mp3", task="translate")

print(result["text"])

- Detecting the language of an audio file:

import whisper

model = whisper.load_model("base")

audio = whisper.load_audio("unknown_language.mp3")

audio = whisper.pad_or_trim(audio)

mel = whisper.log_mel_spectrogram(audio).to(model.device)

_, probs = model.detect_language(mel)

detected_lang = max(probs, key=probs.get)

print(f"Detected language: {detected_lang}")
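When the top guess is uncertain, it can help to look at more than one candidate. The following is a minimal sketch that ranks the entries of the probability dictionary from the language detection example above. The helper name `top_languages` and the sample probabilities are hypothetical and only there for illustration.

```python
def top_languages(probs, k=3):
    """Return the k most probable (language, probability) pairs, best first."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k]


# Hypothetical values, shaped like the dict that detect_language returns
example_probs = {"en": 0.91, "de": 0.05, "nl": 0.03, "fr": 0.01}

for lang, p in top_languages(example_probs, k=2):
    print(f"{lang}: {p:.2f}")
```

In a real script, `probs` from `model.detect_language(mel)` would take the place of `example_probs`.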

Getting Started

To get started with Whisper, follow these steps:

- Install Whisper using pip:

pip install -U openai-whisper

- Install FFmpeg (required for audio processing):

  - On macOS:

  brew install ffmpeg

  - On Windows: Download from https://ffmpeg.org/download.html

  - On Ubuntu or Debian:

  sudo apt update && sudo apt install ffmpeg

- Use Whisper in your Python script:

import whisper

model = whisper.load_model("base")

result = model.transcribe("path/to/your/audio/file.mp3")

print(result["text"])

This will transcribe the specified audio file using the base model. You can choose a different model size ("tiny", "base", "small", "medium", "large", or "turbo") based on your accuracy needs and available computational resources.
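One way to make that choice explicit is to pick the most capable model that fits in the available GPU memory. The sketch below uses the approximate VRAM figures from the model table in the README. The helper `pick_model` and the capability ordering (in particular, ranking turbo above medium) are assumptions made for illustration, not part of the Whisper API.

```python
# (name, approximate required VRAM in GB), ordered from least to most capable.
# The VRAM figures come from the README's model table; the ordering is an assumption.
MODELS = [
    ("tiny", 1),
    ("base", 1),
    ("small", 2),
    ("medium", 5),
    ("turbo", 6),
    ("large", 10),
]


def pick_model(available_vram_gb, need_translation=False):
    """Return the most capable model name that fits in the given VRAM budget."""
    best = None
    for name, vram_gb in MODELS:
        # The README notes that turbo is not trained for translation.
        if need_translation and name == "turbo":
            continue
        if vram_gb <= available_vram_gb:
            best = name
    if best is None:
        raise ValueError(f"no model fits in {available_vram_gb} GB of VRAM")
    return best


print(pick_model(8))                         # budget for transcription only
print(pick_model(8, need_translation=True))  # same budget, translation needed
```

The returned name can be passed straight to `whisper.load_model(...)`.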

Competitor Comparisons

Port of OpenAI's Whisper model in C/C++

Pros of whisper.cpp

- Lightweight and efficient C++ implementation that requires fewer computational resources

- Runs locally without the need for an internet connection or API calls

- Supports various platforms, including mobile devices and embedded systems

Cons of whisper.cpp

- May have slightly lower accuracy compared to the original Python implementation

- Limited to the features available in the C++ port, potentially lacking some advanced functionalities

- Requires manual compilation and setup, which can be more complex for non-technical users

Code Comparison

whisper (Python):

import whisper

model = whisper.load_model("base")

result = model.transcribe("audio.mp3")

print(result["text"])

whisper.cpp (C++):

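#include "whisper.h"

#include <vector>

// whisper_full() expects 16 kHz mono float samples, not a file name;

// decoding audio.wav into this buffer is left to the caller.

std::vector<float> pcm;

whisper_context * ctx = whisper_init_from_file("ggml-base.bin");

whisper_full_params params = whisper_full_default_params(WHISPER_SAMPLING_GREEDY);

whisper_full(ctx, params, pcm.data(), (int) pcm.size());

whisper_print_timings(ctx);

whisper_free(ctx);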

WhisperX: Automatic Speech Recognition with Word-level Timestamps (& Diarization)

Pros of WhisperX

- Improved word-level timestamps for more accurate alignment

- Faster processing times, especially for longer audio files

- Enhanced speaker diarization capabilities

Cons of WhisperX

- May require additional dependencies and setup compared to Whisper

- Potentially less stable due to being a third-party extension

- Could have compatibility issues with future Whisper updates

Code Comparison

Whisper:

import whisper

model = whisper.load_model("base")

result = model.transcribe("audio.mp3")

print(result["text"])

WhisperX:

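import whisperx

device = "cuda"

audio = whisperx.load_audio("audio.mp3")

model = whisperx.load_model("base", device)

result = model.transcribe(audio)

# load the alignment model for the detected language, then align

model_a, metadata = whisperx.load_align_model(language_code=result["language"], device=device)

result = whisperx.align(result["segments"], model_a, metadata, audio, device)

print(result["word_segments"])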

The main difference in usage is that WhisperX provides an additional alignment step for more precise word-level timestamps. WhisperX also offers more detailed output with word segments, which can be beneficial for applications requiring fine-grained timing information.
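As a rough illustration of working with that word-level output, the sketch below formats a list of word entries into readable lines. It assumes each entry is a dict with a "word" key and optional "start" and "end" keys in seconds, and that words which could not be aligned simply lack timings. The helper name `format_word_segments` and the sample data are hypothetical.

```python
def format_word_segments(word_segments):
    """Turn word-level entries into printable lines, tolerating missing timings."""
    lines = []
    for item in word_segments:
        word = item["word"]
        start = item.get("start")
        end = item.get("end")
        if start is None or end is None:
            # Assumption: unaligned words carry no start/end keys.
            lines.append(f"[no timing] {word}")
        else:
            lines.append(f"[{start:6.2f} -> {end:6.2f}] {word}")
    return lines


# Hypothetical sample shaped like the assumed word-level output
sample = [
    {"word": "Hello", "start": 0.5, "end": 0.9},
    {"word": "2014."},
]

for line in format_word_segments(sample):
    print(line)
```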

Faster Whisper transcription with CTranslate2

Pros of faster-whisper

- Significantly faster inference times, especially on GPU

- Reduced memory usage, allowing for processing of longer audio files

- Support for CTranslate2, enabling optimized model execution

Cons of faster-whisper

- May have slightly lower accuracy compared to the original Whisper model

- Requires additional dependencies (CTranslate2) for optimal performance

- Less extensive documentation and community support

Code Comparison

Whisper:

import whisper

model = whisper.load_model("base")

result = model.transcribe("audio.mp3")

print(result["text"])

faster-whisper:

from faster_whisper import WhisperModel

model = WhisperModel("base", device="cuda", compute_type="float16")

segments, info = model.transcribe("audio.mp3", beam_size=5)

for segment in segments:

    print("[%.2fs -> %.2fs] %s" % (segment.start, segment.end, segment.text))

Both repositories provide implementations of the Whisper automatic speech recognition model. While Whisper offers the original OpenAI implementation, faster-whisper focuses on optimizing performance and reducing resource requirements. The code comparison demonstrates the similar usage patterns, with faster-whisper providing more granular control over transcription parameters and output format.
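Because segments expose `start`, `end`, and `text`, they can be converted into subtitle formats with little effort. The following is a minimal sketch that renders such segments as SRT text. The helpers `srt_timestamp` and `to_srt` are hypothetical, and a namedtuple stands in for real segment objects so the example runs without any model.

```python
from collections import namedtuple


def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp, HH:MM:SS,mmm."""
    millis = int(round(seconds * 1000))
    hours, millis = divmod(millis, 3_600_000)
    minutes, millis = divmod(millis, 60_000)
    secs, millis = divmod(millis, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def to_srt(segments):
    """Render objects with .start, .end and .text attributes as SRT text."""
    blocks = []
    for index, seg in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n"
            f"{srt_timestamp(seg.start)} --> {srt_timestamp(seg.end)}\n"
            f"{seg.text.strip()}\n"
        )
    return "\n".join(blocks)


# Stand-in for transcription segments (hypothetical data)
Segment = namedtuple("Segment", "start end text")
demo = [Segment(0.0, 2.5, " Hello there."), Segment(2.5, 4.0, " Goodbye.")]

print(to_srt(demo))
```

With faster-whisper, the `segments` iterable from `model.transcribe(...)` could be passed to `to_srt` in place of `demo`.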

High-performance GPGPU inference of OpenAI's Whisper automatic speech recognition (ASR) model

Pros of Whisper (Const-me)

- Optimized for Windows with DirectCompute, offering faster performance

- Supports real-time speech recognition

- Includes a GUI application for easier use

Cons of Whisper (Const-me)

- Limited to Windows platform

- May not support all features of the original OpenAI implementation

- Potentially less frequently updated compared to the official repository

Code Comparison

Whisper (OpenAI):

import whisper

model = whisper.load_model("base")

result = model.transcribe("audio.mp3")

print(result["text"])

Whisper (Const-me):

#include "whisper.h"

WHISPER_API struct whisper_context* whisper_init_from_file(const char* path_model);

WHISPER_API int whisper_full_default(struct whisper_context * ctx, struct whisper_full_params params, const float * samples, int n_samples);

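The OpenAI version uses Python and provides a high-level API, while the Const-me version is implemented in C++ and offers lower-level access to the model's functionality. Note that the two lines shown for Const-me are function declarations rather than a complete call sequence.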

README

Whisper

[Blog] [Paper] [Model card] [Colab example]

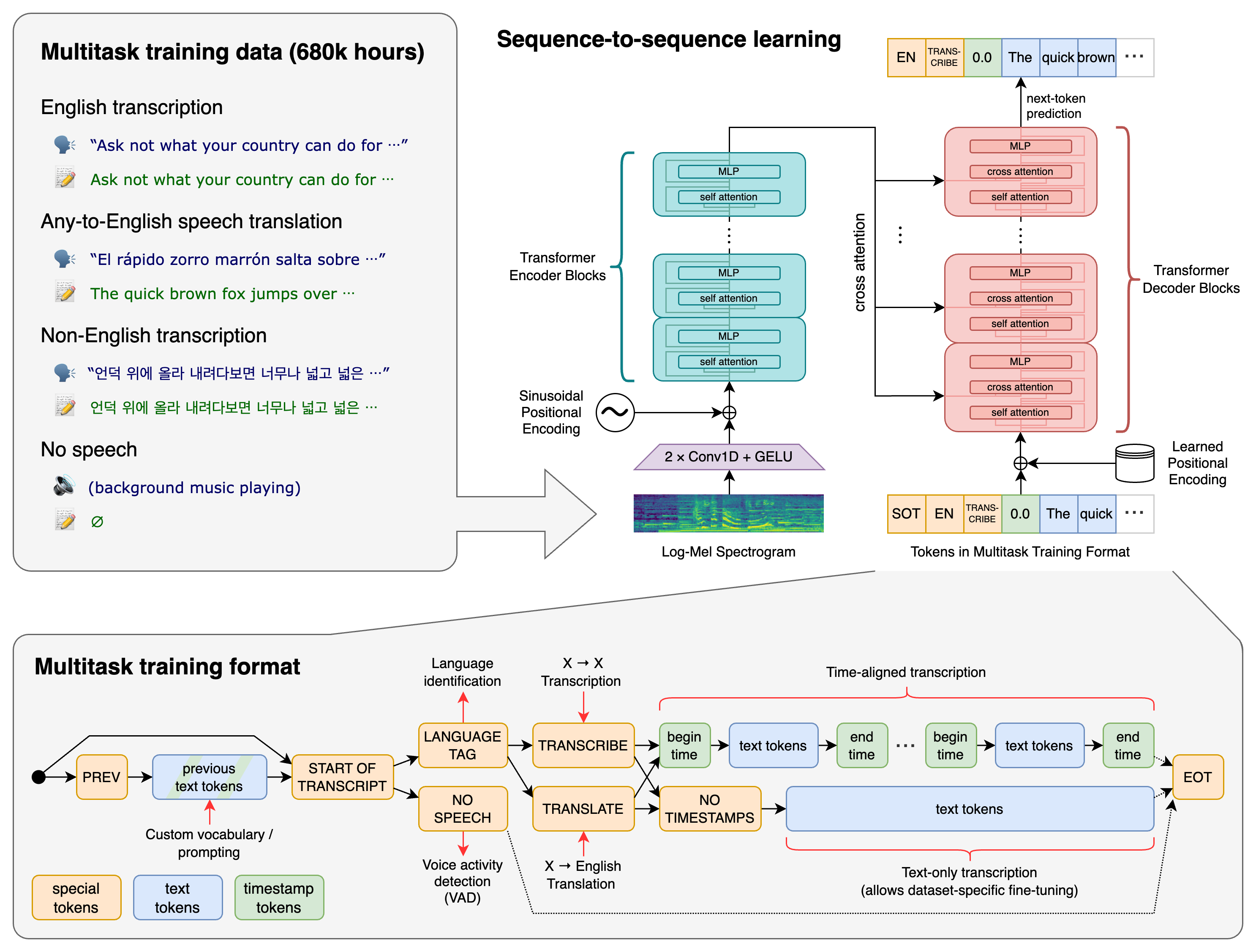

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multitasking model that can perform multilingual speech recognition, speech translation, and language identification.

Approach

A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. These tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing a single model to replace many stages of a traditional speech-processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.
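To make the idea of task specifiers concrete, the sketch below assembles the kind of special-token prefix that tells the decoder which language and task to handle. The token spellings follow the format described in the Whisper paper as best recalled here and should be treated as assumptions; the tokenizer in the repository is the authoritative source. The helper `build_task_tokens` is hypothetical and works with token strings, not token ids.

```python
def build_task_tokens(language="en", task="transcribe", timestamps=True):
    """Build an illustrative decoder prefix as a list of special-token strings."""
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unknown task: {task}")
    tokens = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Ask the decoder to emit plain text without timestamp tokens.
        tokens.append("<|notimestamps|>")
    return tokens


print(build_task_tokens("ja", "translate", timestamps=False))
```

Changing only this prefix is what lets one set of weights switch between transcription and translation.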

Setup

We used Python 3.9.9 and PyTorch 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.8-3.11 and recent PyTorch versions. The codebase also depends on a few Python packages, most notably OpenAI's tiktoken for their fast tokenizer implementation. You can download and install (or update to) the latest release of Whisper with the following command:

pip install -U openai-whisper

Alternatively, the following command will pull and install the latest commit from this repository, along with its Python dependencies:

pip install git+https://github.com/openai/whisper.git

To update the package to the latest version of this repository, please run:

pip install --upgrade --no-deps --force-reinstall git+https://github.com/openai/whisper.git

It also requires the command-line tool ffmpeg to be installed on your system, which is available from most package managers:

# on Ubuntu or Debian

sudo apt update && sudo apt install ffmpeg

# on Arch Linux

sudo pacman -S ffmpeg

# on MacOS using Homebrew (https://brew.sh/)

brew install ffmpeg

# on Windows using Chocolatey (https://chocolatey.org/)

choco install ffmpeg

# on Windows using Scoop (https://scoop.sh/)

scoop install ffmpeg

You may also need Rust installed, in case tiktoken does not provide a pre-built wheel for your platform. If you see installation errors during the pip install command above, please follow the Getting started page to install the Rust development environment. Additionally, you may need to configure the PATH environment variable, e.g. export PATH="$HOME/.cargo/bin:$PATH". If the installation fails with No module named 'setuptools_rust', you need to install setuptools_rust, e.g. by running:

pip install setuptools-rust

Available models and languages

There are six model sizes, four with English-only versions, offering speed and accuracy tradeoffs. Below are the names of the available models and their approximate memory requirements and inference speed relative to the large model. The relative speeds below are measured by transcribing English speech on an A100, and the real-world speed may vary significantly depending on many factors including the language, the speaking speed, and the available hardware.

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|---|---|---|---|---|---|
| tiny | 39 M | tiny.en | tiny | ~1 GB | ~10x |
| base | 74 M | base.en | base | ~1 GB | ~7x |
| small | 244 M | small.en | small | ~2 GB | ~4x |
| medium | 769 M | medium.en | medium | ~5 GB | ~2x |
| large | 1550 M | N/A | large | ~10 GB | 1x |
| turbo | 809 M | N/A | turbo | ~6 GB | ~8x |

The .en models for English-only applications tend to perform better, especially for the tiny.en and base.en models. We observed that the difference becomes less significant for the small.en and medium.en models.

Additionally, the turbo model is an optimized version of large-v3 that offers faster transcription speed with a minimal degradation in accuracy.

Whisper's performance varies widely depending on the language. The figure below shows a performance breakdown of large-v3 and large-v2 models by language, using WERs (word error rates) or CER (character error rates, shown in Italic) evaluated on the Common Voice 15 and Fleurs datasets. Additional WER/CER metrics corresponding to the other models and datasets can be found in Appendix D.1, D.2, and D.4 of the paper, as well as the BLEU (Bilingual Evaluation Understudy) scores for translation in Appendix D.3.
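For readers unfamiliar with the metric, WER is the word-level edit distance between a reference transcript and the model output, divided by the number of reference words. The sketch below is a plain implementation of that definition. It applies no text normalization (casing, punctuation), which published evaluations typically do, so its numbers are not directly comparable to the paper's. The function name `word_error_rate` is hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Dynamic-programming edit distance, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

CER follows the same recipe with characters in place of words.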

Command-line usage

The following command will transcribe speech in audio files, using the turbo model:

whisper audio.flac audio.mp3 audio.wav --model turbo

The default setting (which selects the turbo model) works well for transcribing English. However, the turbo model is not trained for translation tasks. If you need to translate non-English speech into English, use one of the multilingual models (tiny, base, small, medium, large) instead of turbo.

For example, to transcribe an audio file containing non-English speech, you can specify the language:

whisper japanese.wav --language Japanese

To translate speech into English, use:

whisper japanese.wav --model medium --language Japanese --task translate

Note: The turbo model will return the original language even if --task translate is specified. Use medium or large for the best translation results.

Run the following to view all available options:

whisper --help

See tokenizer.py for the list of all available languages.
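When the command-line tool is driven from a script, it can be convenient to assemble the argument list in one place and reject the turbo-plus-translate combination early. The sketch below uses only the flags shown above (`--model`, `--language`, `--task`). The helper `build_whisper_command` is hypothetical, and nothing is executed unless the result is passed to something like `subprocess.run`.

```python
import shlex


def build_whisper_command(audio_files, model="turbo", language=None, task="transcribe"):
    """Assemble a whisper CLI invocation as a list of arguments."""
    if task == "translate" and model == "turbo":
        # Per the README, turbo is not trained for translation.
        raise ValueError("turbo does not translate; use e.g. medium or large")
    cmd = ["whisper", *audio_files, "--model", model]
    if language:
        cmd += ["--language", language]
    if task != "transcribe":
        cmd += ["--task", task]
    return cmd


cmd = build_whisper_command(
    ["japanese.wav"], model="medium", language="Japanese", task="translate"
)
print(shlex.join(cmd))
# To run it for real: subprocess.run(cmd, check=True)
```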

Python usage

Transcription can also be performed within Python:

import whisper

model = whisper.load_model("turbo")

result = model.transcribe("audio.mp3")

print(result["text"])

Internally, the transcribe() method reads the entire file and processes the audio with a sliding 30-second window, performing autoregressive sequence-to-sequence predictions on each window.
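The windowing can be pictured with a simplified sketch that cuts a sample count into consecutive 30-second ranges, assuming the 16 kHz sample rate Whisper resamples audio to. This is an illustration only: the real `transcribe()` decides how far to advance based on the timestamps it predicts, so its windows need not fall on these fixed boundaries. The helper `fixed_windows` is hypothetical.

```python
SAMPLE_RATE = 16_000   # samples per second after resampling
WINDOW_SECONDS = 30    # length of one processing window


def fixed_windows(n_samples, window_seconds=WINDOW_SECONDS, sample_rate=SAMPLE_RATE):
    """Return (start, end) sample ranges covering n_samples in fixed-size windows."""
    window = window_seconds * sample_rate
    return [
        (start, min(start + window, n_samples))
        for start in range(0, n_samples, window)
    ]


# A 70-second clip: two full windows plus a 10-second remainder
for start, end in fixed_windows(70 * SAMPLE_RATE):
    print(f"{start / SAMPLE_RATE:5.1f}s -> {end / SAMPLE_RATE:5.1f}s")
```

The final, shorter range corresponds to the part of the audio that `pad_or_trim` would pad up to a full 30 seconds.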

Below is an example usage of whisper.detect_language() and whisper.decode() which provide lower-level access to the model.

import whisper

model = whisper.load_model("turbo")

# load audio and pad/trim it to fit 30 seconds

audio = whisper.load_audio("audio.mp3")

audio = whisper.pad_or_trim(audio)

# make log-Mel spectrogram and move to the same device as the model

mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels).to(model.device)

# detect the spoken language

_, probs = model.detect_language(mel)

print(f"Detected language: {max(probs, key=probs.get)}")

# decode the audio

options = whisper.DecodingOptions()

result = whisper.decode(model, mel, options)

# print the recognized text

print(result.text)

More examples

Please use the Show and tell category in Discussions for sharing more example usages of Whisper and third-party extensions such as web demos, integrations with other tools, ports for different platforms, etc.

License

Whisper's code and model weights are released under the MIT License. See LICENSE for further details.
