Top Related Projects

Animation-Instancing: This technique is designed to instance Characters(SkinnedMeshRender).

Skinner: Special Effects with Skinned Mesh in Unity

Sharpmake: an open-source C#-based solution for generating project definition files, such as Visual Studio projects and solutions, GNU makefiles, Xcode projects, etc.

ACL: Animation Compression Library

Quick Overview

AI4Animation is a research project exploring the use of deep learning techniques for character animation and control. It focuses on developing neural networks that can generate realistic and responsive character movements for various applications, including games and simulations.

Pros

- Cutting-edge research in character animation using machine learning

- Provides implementations of various neural network architectures for animation

- Includes demos and examples for practical applications

- Open-source project with potential for community contributions

Cons

- Requires deep understanding of machine learning and character animation

- May have a steep learning curve for beginners

- Limited documentation for some components

- Might require significant computational resources for training and running models

Code Examples

    # Loading a pre-trained model
    from AI4Animation import NeuralNetwork

    model = NeuralNetwork.load('path/to/pretrained_model.pth')

    # Generating animation from input data
    input_data = prepare_input_data()  # Prepare your input data
    animation = model.generate_animation(input_data)

    # Fine-tuning the model on custom data
    from AI4Animation import Trainer

    trainer = Trainer(model)
    trainer.train(custom_dataset, epochs=10, learning_rate=0.001)

Getting Started

- Clone the repository:

    git clone https://github.com/sebastianstarke/AI4Animation.git

- Install dependencies:

    pip install -r requirements.txt

- Run a demo:

    python demos/bipedal_locomotion.py

Explore the documentation and examples in the repository to understand how to use the library for your specific animation needs.

Competitor Comparisons

This technique is designed to instance Characters(SkinnedMeshRender).

Pros of Animation-Instancing

- Focuses on performance optimization for rendering large numbers of animated characters

- Provides a solution for GPU-based animation instancing

- Integrates seamlessly with Unity's existing animation system

Cons of Animation-Instancing

- Limited to traditional animation techniques, lacking advanced AI-driven animations

- May not provide the same level of realistic character movement as AI4Animation

- Less suitable for complex, dynamic character interactions

Code Comparison

Animation-Instancing:

    public class AnimationInstancing : MonoBehaviour
    {
        public AnimationClip[] animationClips;
        private AnimationInstancingMgr instancingManager;
    }

AI4Animation:

    public class NeuralNetwork : MonoBehaviour
    {
        public NeuralNetworkModel model;
        private TensorFlow.Session session;
    }

The Animation-Instancing code focuses on managing animation clips for instancing, while AI4Animation utilizes neural network models for generating animations. AI4Animation's approach allows for more dynamic and adaptable character movements, potentially at the cost of higher computational requirements. Animation-Instancing, on the other hand, prioritizes efficient rendering of multiple animated instances, which can be beneficial for large-scale scenes with many characters.

Special Effects with Skinned Mesh in Unity

Pros of Skinner

- Focuses on real-time mesh deformation and skinning techniques

- Provides a lightweight solution for Unity-based projects

- Offers visual effects like vertex displacement and tessellation

Cons of Skinner

- Limited to mesh manipulation and doesn't cover character animation

- Lacks machine learning or AI-driven animation capabilities

- May require more manual setup for complex character rigs

Code Comparison

Skinner (Unity C#):

    [RequireComponent(typeof(MeshFilter))]
    public class SkinnedMeshDeformer : MonoBehaviour
    {
        public float deformAmount = 1f;
        private Mesh deformingMesh;
        private Vector3[] originalVertices, displacedVertices;
    }

AI4Animation (Python with TensorFlow):

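    import tensorflow as tf

    class PFNN:
        def __init__(self, xDim, hDim, yDim):
            # Input, hidden and output dimensions of the network
            self.xDim = xDim
            self.hDim = hDim
            self.yDim = yDim
            # Randomly initialised weight matrix
            self.W = tf.Variable(tf.random.normal([hDim, xDim + hDim + yDim]))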

While Skinner focuses on mesh deformation within Unity, AI4Animation utilizes machine learning techniques for character animation, offering different approaches to animation challenges in game development and computer graphics.

Sharpmake is an open-source C#-based solution for generating project definition files, such as Visual Studio projects and solutions, GNU makefiles, Xcode projects, etc.

Pros of Sharpmake

- Focused on build system generation, providing a more specialized tool for project management

- Supports multiple platforms and build configurations out of the box

- Actively maintained by Ubisoft with regular updates and improvements

Cons of Sharpmake

- Steeper learning curve due to its domain-specific language

- Configuration scripts are written in C# only, whereas AI4Animation combines Unity3D (C#) with PyTorch (Python)

- Less versatile in terms of application domains compared to AI4Animation's broad focus on animation

Code Comparison

Sharpmake (C#):

    [Generate]
    public class MyProject : Project
    {
        public MyProject()
        {
            Name = "MyProject";
            SourceRootPath = @"[project.SharpmakeCsPath]\codebase";
        }
    }

AI4Animation (Python):

    import torch.nn as nn

    class NeuralNetwork(nn.Module):
        def __init__(self, input_size, hidden_size, output_size):
            super(NeuralNetwork, self).__init__()
            # One hidden layer followed by a linear output layer
            self.hidden = nn.Linear(input_size, hidden_size)
            self.output = nn.Linear(hidden_size, output_size)

Animation Compression Library

Pros of ACL

- Focused on animation compression, offering high-performance and memory-efficient solutions

- Provides cross-platform support and integration with popular game engines

- Actively maintained with regular updates and improvements

Cons of ACL

- Limited to animation compression, lacking the broader AI-driven animation features of AI4Animation

- May require more manual setup and integration compared to AI4Animation's Unity-based approach

- Less suitable for real-time, AI-driven character animation

Code Comparison

ACL (C++):

    acl::compression_settings settings;
    settings.level = acl::compression_level8::medium;
    acl::output_stats stats;
    acl::compress_clip(allocator, clip, settings, compressed_data, stats);

AI4Animation (C#):

    NeuralNetwork network = new NeuralNetwork();
    network.Setup();
    Vector3 prediction = network.Predict(input);
    character.ApplyPrediction(prediction);

Both repositories focus on animation-related tasks, but ACL specializes in compression while AI4Animation emphasizes AI-driven animation generation. ACL is more suitable for optimizing animation storage and playback, while AI4Animation excels in creating dynamic, intelligent character animations.

README

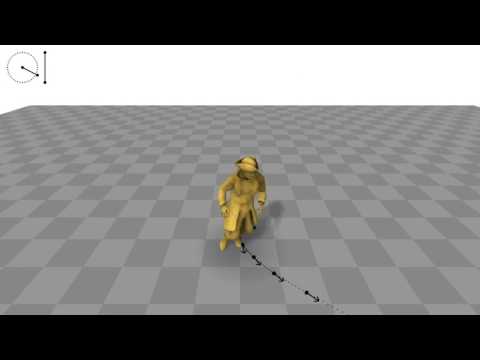

AI4Animation: Deep Learning for Character Control

This repository explores the opportunities of deep learning for character animation and control. It aims to be a comprehensive framework for data-driven character animation, including data processing, neural network training and runtime control, developed in Unity3D / PyTorch. The various projects below demonstrate such capabilities using neural networks for animating biped locomotion, quadruped locomotion, and character-scene interactions with objects and the environment, plus sports and fighting games, as well as embodied avatar motions in AR/VR. Further advances on this research will continue being added to this project.

SIGGRAPH 2024

Categorical Codebook Matching for Embodied Character Controllers

Sebastian Starke,

Paul Starke,

Nicky He,

Taku Komura,

Yuting Ye.

ACM Trans. Graph. 43, 4, Article 142.

Translating motions from a real user onto a virtual embodied avatar is a key challenge for character animation in the metaverse. In this work, we present a novel generative framework that enables mapping from a set of sparse sensor signals to a full body avatar motion in real-time while faithfully preserving the motion context of the user. In contrast to existing techniques that require training a motion prior and its mapping from control to motion separately, our framework is able to learn the motion manifold as well as how to sample from it at the same time in an end-to-end manner. To achieve that, we introduce a technique called codebook matching which matches the probability distribution between two categorical codebooks for the inputs and outputs for synthesizing the character motions. We demonstrate this technique can successfully handle ambiguity in motion generation and produce high quality character controllers from unstructured motion capture data. Our method is especially useful for interactive applications like virtual reality or video games where high accuracy and responsiveness are needed.

- Video - Paper - Dataset - Code - VR Demo - Windows Demo - Mac Demo - ReadMe -

Unlike existing methods for kinematic character control that learn a direct mapping between inputs and outputs or utilize a motion prior that is trained on the motion data alone, our framework learns from both the inputs and outputs simultaneously to form a motion manifold that is informed about the control signals. To learn such a setup in a supervised manner, we propose a technique that we call Codebook Matching, which enforces similarity between both latent probability distributions $Z_X$ and $Z_Y$. In the context of motion generation, instead of directly predicting the motion outputs from the control inputs, we only predict the probability of each of them appearing. By introducing a matching loss between both categorical probability distributions, our codebook matching technique allows us to substitute $Z_Y$ by $Z_X$ during test time.

Training:

$$
\begin{cases}
Y \rightarrow Z_Y \rightarrow Y \\
X \rightarrow Z_X \\
Z_X \sim Z_Y
\end{cases}
$$

Inference:

$$
X \rightarrow Z_X \rightarrow Y
$$
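The training and inference paths above can be illustrated with a minimal, standard-library-only sketch. It is not the repository's implementation: the codebook values, the raw scores, and the use of a mean squared difference as the matching loss are all assumptions made for illustration, and the helper names (`softmax`, `matching_loss`, `decode`) are hypothetical.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into a categorical probability distribution."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matching_loss(z_x, z_y):
    """Mean squared difference between two distributions (assumed loss form)."""
    return sum((a - b) ** 2 for a, b in zip(z_x, z_y)) / len(z_x)


def decode(z, codebook, rng):
    """Sample one code index from z and look up its output vector."""
    index = rng.choices(range(len(z)), weights=z, k=1)[0]
    return codebook[index]


# Hypothetical 3-entry codebook; each code stands for one output motion Y.
codebook = [[0.0, 1.0], [1.0, 0.0], [0.5, 0.5]]

z_y = softmax([4.0, 0.0, 0.0])  # Training: Y -> Z_Y (made-up encoder scores)
z_x = softmax([3.5, 0.2, 0.1])  # Training: X -> Z_X (made-up estimator scores)
loss = matching_loss(z_x, z_y)  # Training: Z_X ~ Z_Y

# Inference: X -> Z_X -> Y, the encoder for Y is no longer needed.
y = decode(z_x, codebook, random.Random(0))
```

Once the matching loss is small, sampling from `z_x` selects the same codes that `z_y` would have selected, which is what makes the substitution at test time possible.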

Our method is not limited to three-point inputs but we can also use it to generate embodied character movements with additional joystick or button controls by what we call hybrid control mode. In this setting, the user, engineer or artist can additionally tell the character where to go via a simple goal location while preserving the original context of motion from three-point tracking signals. This changes the scope of applications we can address by walking / running / crouching in the virtual world while standing or even sitting in the real world.

Furthermore, our codebook matching architecture shares many similarities with motion matching and is able to learn a similar structure in an end-to-end manner. While motion matching can bypass ambiguity in the mapping from control to motion by selecting among candidates with similar query distances, our setup selects possible outcomes from predicted probabilities and naturally projects against valid output motions if their probabilities are similar. However, in contrast to database searches, our codebook matching is able to effectively compress the motion data where same motions map to same codes, and can bypass ambiguity issues which existing learning-based methods such as standard feed-forward networks (MLP) or variational models (CVAE) may struggle with. We demonstrate such capabilities by reconstructing the ambiguous toy example functions in the figure below.
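The ambiguity problem can be made concrete with a small toy case, given here as a sketch under stated assumptions rather than a reproduction of the paper's experiment. It assumes a single control input with two equally valid outcomes, `-1.0` and `1.0`. A least-squares regressor converges to their mean, which is not a valid outcome, while a categorical model picks one of the valid outcomes.

```python
import random

# Toy ambiguous mapping: the same control input has two valid outcomes.
valid_outputs = [-1.0, 1.0]
rng = random.Random(0)
samples = [rng.choice(valid_outputs) for _ in range(1000)]

# A least-squares regressor converges to the sample mean, which lies between
# the two modes and is not a valid outcome itself.
regression_output = sum(samples) / len(samples)

# A categorical model estimates one probability per outcome and selects one.
probabilities = [samples.count(v) / len(samples) for v in valid_outputs]
categorical_output = rng.choices(valid_outputs, weights=probabilities, k=1)[0]
```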

SIGGRAPH 2022

DeepPhase: Periodic Autoencoders for Learning Motion Phase Manifolds

Sebastian Starke,

Ian Mason,

Taku Komura.

ACM Trans. Graph. 41, 4, Article 136.

Learning the spatial-temporal structure of body movements is a fundamental problem for character motion synthesis. In this work, we propose a novel neural network architecture called the Periodic Autoencoder that can learn periodic features from large unstructured motion datasets in an unsupervised manner. The character movements are decomposed into multiple latent channels that capture the non-linear periodicity of different body segments while progressing forward in time. Our method extracts a multi-dimensional phase space from full-body motion data, which effectively clusters animations and produces a manifold in which computed feature distances provide a better similarity measure than in the original motion space to achieve better temporal and spatial alignment. We demonstrate that the learned periodic embedding can significantly help to improve neural motion synthesis in a number of tasks, including diverse locomotion skills, style-based movements, dance motion synthesis from music, synthesis of dribbling motions in football, and motion query for matching poses within large animation databases.

- Video - Paper - PAE Code & Demo - Animation Code & Demo - Explanation and Addendum - Tutorial -

- Motion In-Betweening System -
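To give a feel for what a periodic parameterization of one latent channel looks like, the sketch below recovers amplitude, frequency, offset and phase from a sampled curve with a plain discrete Fourier transform. This is a simplification made for illustration: it reads all four values from the strongest frequency bin, which is an assumption and not necessarily how the Periodic Autoencoder computes them. The function name `periodic_parameters` and the synthetic curve are hypothetical.

```python
import cmath
import math


def periodic_parameters(curve, duration):
    """Estimate amplitude, frequency (Hz), offset and phase (in cycles)."""
    n = len(curve)
    # Plain discrete Fourier transform for the bins below the Nyquist frequency.
    bins = [
        sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(curve))
        for k in range((n + 1) // 2)
    ]
    offset = bins[0].real / n
    # Pick the strongest non-constant frequency bin.
    k = max(range(1, len(bins)), key=lambda i: abs(bins[i]))
    amplitude = 2.0 * abs(bins[k]) / n
    frequency = k / duration
    # Convention: curve ~ amplitude * cos(2*pi*(frequency*time + phase)) + offset
    phase = cmath.phase(bins[k]) / (2.0 * math.pi)
    return amplitude, frequency, offset, phase


# Synthetic latent curve: 2 Hz sine, amplitude 0.5, offset 0.1, 1 second long.
n, duration = 60, 1.0
curve = [0.5 * math.sin(2.0 * math.pi * 2.0 * i / n) + 0.1 for i in range(n)]
amplitude, frequency, offset, phase = periodic_parameters(curve, duration)
```

With several such channels, each frame of motion is described by a small set of (amplitude, frequency, offset, phase) tuples, and distances between those tuples can serve as the similarity measure the abstract refers to.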

SIGGRAPH 2021

Neural Animation Layering for Synthesizing Martial Arts Movements

Sebastian Starke,

Yiwei Zhao,

Fabio Zinno,

Taku Komura.

ACM Trans. Graph. 40, 4, Article 92.

Interactively synthesizing novel combinations and variations of character movements from different motion skills is a key problem in computer animation. In this research, we propose a deep learning framework to produce a large variety of martial arts movements in a controllable manner from raw motion capture data. Our method imitates animation layering using neural networks with the aim to overcome typical challenges when mixing, blending and editing movements from unaligned motion sources. The system can be used for offline and online motion generation alike, provides an intuitive interface to integrate with animator workflows, and is relevant for real-time applications such as computer games.

SIGGRAPH 2020

Local Motion Phases for Learning Multi-Contact Character Movements

Sebastian Starke,

Yiwei Zhao,

Taku Komura,

Kazi Zaman.

ACM Trans. Graph. 39, 4, Article 54.

Not sure how to align complex character movements? Tired of phase labeling? Unclear how to squeeze everything into a single phase variable? Don't worry, a solution exists!

Controlling characters to perform a large variety of dynamic, fast-paced and quickly changing movements is a key challenge in character animation. In this research, we present a deep learning framework to interactively synthesize such animations in high quality, both from unstructured motion data and without any manual labeling. We introduce the concept of local motion phases, and show our system being able to produce various motion skills, such as ball dribbling and professional maneuvers in basketball plays, shooting, catching, avoidance, multiple locomotion modes as well as different character and object interactions, all generated under a unified framework.

- Video - Paper - Code - Windows Demo - ReadMe -

SIGGRAPH Asia 2019

Neural State Machine for Character-Scene Interactions

Sebastian Starke+,

He Zhang+,

Taku Komura,

Jun Saito.

ACM Trans. Graph. 38, 6, Article 178.

(+Joint First Authors)

Animating characters can be an easy or difficult task - interacting with objects is one of the latter. In this research, we present the Neural State Machine, a data-driven deep learning framework for character-scene interactions. The difficulty in such animations is that they require complex planning of periodic as well as aperiodic movements to complete a given task. Creating them in production-ready quality is not straightforward and often very time-consuming. Instead, our system can synthesize different movements and scene interactions from motion capture data, and allows the user to seamlessly control the character in real-time from simple control commands. Since our model directly learns from the geometry, the motions can naturally adapt to variations in the scene. We show that our system can generate a large variety of movements, including locomotion, sitting on chairs, carrying boxes, opening doors and avoiding obstacles, all from a single model. The model is responsive, compact and scalable, and is the first of such frameworks to handle scene interaction tasks for data-driven character animation.

- Video - Paper - Code & Demo - Mocap Data - ReadMe -

SIGGRAPH 2018

Mode-Adaptive Neural Networks for Quadruped Motion Control

He Zhang+,

Sebastian Starke+,

Taku Komura,

Jun Saito.

ACM Trans. Graph. 37, 4, Article 145.

(+Joint First Authors)

Animating characters can be a pain, especially those four-legged monsters! This year, we will be presenting our recent research on quadruped animation and character control at the SIGGRAPH 2018 in Vancouver. The system can produce natural animations from real motion data using a novel neural network architecture, called Mode-Adaptive Neural Networks. Instead of optimising a fixed group of weights, the system learns to dynamically blend a group of weights into a further neural network, based on the current state of the character. That said, the system does not require labels for the phase or locomotion gaits, but can learn from unstructured motion capture data in an end-to-end fashion.

- Video - Paper - Code - Mocap Data - Windows Demo - Linux Demo - Mac Demo - ReadMe -
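The idea of blending a group of weights into a further network can be sketched in a few lines of plain Python. This is an illustrative sketch, not the repository's code: the two expert matrices, the gating scores and the helper names (`blend_experts`, `apply_layer`) are hypothetical, and a softmax over the gating scores is assumed to produce the blending coefficients.

```python
import math


def softmax(scores):
    """Normalise gating scores into blending coefficients that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def blend_experts(experts, coefficients):
    """Weighted sum of same-shaped weight matrices, one coefficient each."""
    rows, cols = len(experts[0]), len(experts[0][0])
    return [
        [sum(c * e[r][k] for c, e in zip(coefficients, experts)) for k in range(cols)]
        for r in range(rows)
    ]


def apply_layer(weights, inputs):
    """Matrix-vector product, standing in for one layer of the motion network."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


# Two hypothetical expert weight sets (2x2) and made-up gating scores.
experts = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.0, 1.0], [1.0, 0.0]],
]
gating_scores = [2.0, 0.0]  # would come from a gating network fed with the character state
coefficients = softmax(gating_scores)
weights = blend_experts(experts, coefficients)
output = apply_layer(weights, [1.0, 0.0])
```

Because the coefficients are recomputed every frame from the current state, the effective weights change continuously with the motion, without any phase or gait labels.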

SIGGRAPH 2017

Phase-Functioned Neural Networks for Character Control

Daniel Holden,

Taku Komura,

Jun Saito.

ACM Trans. Graph. 36, 4, Article 42.

This work continues the recent work on PFNN (Phase-Functioned Neural Networks) for character control. A demo in Unity3D using the original weights for terrain-adaptive locomotion is contained in the Assets/Demo/SIGGRAPH_2017/Original folder. Another demo on flat ground using the Adam character is contained in the Assets/Demo/SIGGRAPH_2017/Adam folder. In order to run them, you need to download the neural network weights from the link provided in the Link.txt file, extract them into the /NN folder, and store the parameters via the custom inspector button.

- Video - Paper - Code (Unity) - Windows Demo - Linux Demo - Mac Demo -
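For readers new to PFNN, the sketch below shows one way a phase value can select network weights: a cyclic Catmull-Rom spline over four control points, which is how the PFNN paper is commonly described. Treat the details as assumptions. The control weights here are made-up two-element vectors, and `phase_function` is a hypothetical helper, not a function from this repository.

```python
import math


def catmull_rom(y0, y1, y2, y3, mu):
    """Cubic Catmull-Rom interpolation between y1 (mu=0) and y2 (mu=1)."""
    return (
        y1
        + mu * (0.5 * y2 - 0.5 * y0)
        + mu ** 2 * (y0 - 2.5 * y1 + 2.0 * y2 - 0.5 * y3)
        + mu ** 3 * (1.5 * y1 - 1.5 * y2 + 0.5 * y3 - 0.5 * y0)
    )


def phase_function(control_weights, phase):
    """Blend cyclic control points into one weight list, phase in [0, 2*pi)."""
    count = len(control_weights)
    position = count * phase / (2.0 * math.pi)
    index = int(position) % count
    mu = position - int(position)
    # Indices of the four neighbouring control points, wrapping around the cycle.
    k0, k1, k2, k3 = [(index + offset - 1) % count for offset in range(4)]
    return [
        catmull_rom(a, b, c, d, mu)
        for a, b, c, d in zip(
            control_weights[k0], control_weights[k1],
            control_weights[k2], control_weights[k3],
        )
    ]


# Four hypothetical control points, each a flattened weight vector of length 2.
control_weights = [[0.0, 1.0], [1.0, 0.0], [0.0, -1.0], [-1.0, 0.0]]
at_zero = phase_function(control_weights, 0.0)
at_quarter = phase_function(control_weights, math.pi / 2.0)
```

At phases that fall exactly on a control point the spline returns that control point's weights, and in between it interpolates smoothly, so the network weights vary continuously over the locomotion cycle.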

Thesis Fast Forward Presentation from SIGGRAPH 2020

Copyright Information

This project is only for research or education purposes, and not freely available for commercial use or redistribution. The motion capture data is available only under the terms of the Attribution-NonCommercial 4.0 International (CC BY-NC 4.0) license.
