Awesome-Prompt-Engineering

Awesome-Prompt-Engineering

This repository contains a hand-curated resources for Prompt Engineering with a focus on Generative Pre-trained Transformer (GPT), ChatGPT, PaLM etc

Top Related Projects

🐙 Guides, papers, lecture, notebooks and resources for prompt engineering

A library for helping developers craft prompts for Large Language Models

This repo includes ChatGPT prompt curation to use ChatGPT and other LLM tools better.

Examples and guides for using the OpenAI API

Prompt Engineering, Generative AI, and LLM Guide by Learn Prompting | Join our discord for the largest Prompt Engineering learning community

A curated list of awesome resources, tools, and other shiny things for LLM prompt engineering.

Quick Overview

Awesome-Prompt-Engineering is a curated list of resources, tools, and techniques for prompt engineering in AI and machine learning. It serves as a comprehensive guide for developers, researchers, and enthusiasts interested in leveraging prompts to enhance AI model performance and capabilities.

Pros

- Extensive collection of prompt engineering resources in one place

- Regularly updated with new tools, techniques, and research papers

- Well-organized structure for easy navigation and discovery

- Includes both theoretical concepts and practical applications

Cons

- May be overwhelming for beginners due to the vast amount of information

- Some listed resources might become outdated over time

- Lacks in-depth explanations or tutorials for each listed item

- Primarily focuses on English-language resources, potentially limiting its global reach

Getting Started

As this is not a code library but a curated list of resources, there is no code-based getting started guide. However, users can begin exploring the repository by following these steps:

- Visit the GitHub repository: promptslab/Awesome-Prompt-Engineering

- Browse through the table of contents to find topics of interest

- Click on the links to access specific resources, tools, or research papers

- Star the repository to keep track of updates and new additions

- Consider contributing to the project by submitting pull requests for new resources or improvements

Competitor Comparisons

🐙 Guides, papers, lecture, notebooks and resources for prompt engineering

Pros of Prompt-Engineering-Guide

- More comprehensive and structured content, covering a wide range of topics in prompt engineering

- Includes practical examples and code snippets for various use cases

- Regularly updated with new techniques and best practices

Cons of Prompt-Engineering-Guide

- May be overwhelming for beginners due to its extensive content

- Focuses more on theoretical concepts and less on curated resources

Code Comparison

Prompt-Engineering-Guide:

prompt = f"""

Translate the following English text to French:

```{text}```

"""

response = get_completion(prompt)

print(response)

Awesome-Prompt-Engineering:

# No specific code examples provided in the repository

Summary

Prompt-Engineering-Guide offers a more in-depth and structured approach to learning prompt engineering, with practical examples and regular updates. However, it may be more suitable for intermediate to advanced users. Awesome-Prompt-Engineering, on the other hand, serves as a curated list of resources, making it easier for beginners to find relevant information quickly. The choice between the two repositories depends on the user's learning style and level of expertise in prompt engineering.

A library for helping developers craft prompts for Large Language Models

Pros of prompt-engine

- Focused on providing a structured framework for prompt engineering

- Offers a more comprehensive set of tools and utilities for prompt development

- Includes features for prompt testing and evaluation

Cons of prompt-engine

- Less community-driven content compared to Awesome-Prompt-Engineering

- May have a steeper learning curve for beginners

- More limited in scope, focusing primarily on Microsoft's approach to prompt engineering

Code Comparison

prompt-engine:

from promptengine import PromptTemplate

template = PromptTemplate("Summarize the following text: {text}")

prompt = template.format(text="Long article content here...")

Awesome-Prompt-Engineering:

# No specific code examples provided in the repository

# Focuses on curating resources and best practices

Summary

prompt-engine is a more structured and comprehensive tool for prompt engineering, offering a framework and utilities for development and testing. Awesome-Prompt-Engineering, on the other hand, serves as a curated list of resources and best practices, making it more accessible for beginners but less feature-rich in terms of actual prompt development tools.

This repo includes ChatGPT prompt curation to use ChatGPT and other LLM tools better.

Pros of awesome-chatgpt-prompts

- Extensive collection of ready-to-use prompts for various scenarios

- Well-organized with clear categories and descriptions

- Active community contributing new prompts regularly

Cons of awesome-chatgpt-prompts

- Focused primarily on ChatGPT, limiting its applicability to other models

- Lacks in-depth explanations of prompt engineering techniques

- Minimal information on prompt optimization and best practices

Code Comparison

Awesome-Prompt-Engineering:

## Prompt Engineering Techniques

- Chain-of-Thought Prompting

- Few-Shot Prompting

- Zero-Shot Prompting

awesome-chatgpt-prompts:

# Prompts

## Act as a Linux Terminal

I want you to act as a linux terminal. I will type commands and you will reply with what the terminal should show...

The code snippets highlight the different focus areas of the repositories. Awesome-Prompt-Engineering provides a structured list of prompt engineering techniques, while awesome-chatgpt-prompts offers specific, ready-to-use prompts for various scenarios.

Examples and guides for using the OpenAI API

Pros of openai-cookbook

- Provides practical, hands-on examples and code snippets for working with OpenAI's APIs

- Regularly updated with new features and best practices from OpenAI

- Offers in-depth explanations and tutorials for various use cases

Cons of openai-cookbook

- Focuses primarily on OpenAI's products, limiting its scope compared to Awesome-Prompt-Engineering

- May not cover as wide a range of prompt engineering techniques and strategies

- Less community-driven content and contributions

Code Comparison

openai-cookbook:

import openai

response = openai.Completion.create(

engine="text-davinci-002",

prompt="Translate the following English text to French: '{}'",

max_tokens=60

)

Awesome-Prompt-Engineering:

# Translation Prompt

Translate the following English text to French:

[Insert text here]

The openai-cookbook provides specific code implementation, while Awesome-Prompt-Engineering focuses on prompt templates and strategies that can be applied across various language models and platforms.

Prompt Engineering, Generative AI, and LLM Guide by Learn Prompting | Join our discord for the largest Prompt Engineering learning community

Pros of Learn_Prompting

- More structured and comprehensive learning approach

- Includes interactive exercises and quizzes

- Offers a clear progression path for learners

Cons of Learn_Prompting

- Less frequently updated compared to Awesome-Prompt-Engineering

- Focuses primarily on text-based prompts, with less coverage of multimodal prompting

- May not include the latest cutting-edge techniques as quickly

Code Comparison

Learn_Prompting:

def generate_prompt(topic, style):

return f"Write a {style} essay about {topic}."

prompt = generate_prompt("climate change", "persuasive")

Awesome-Prompt-Engineering:

from transformers import pipeline

generator = pipeline('text-generation', model='gpt2')

prompt = "Explain the concept of prompt engineering:"

response = generator(prompt, max_length=100)

While Learn_Prompting focuses on teaching prompt construction techniques, Awesome-Prompt-Engineering provides more diverse examples and resources for implementing prompt engineering in various contexts. Learn_Prompting is better suited for beginners looking for a structured learning path, while Awesome-Prompt-Engineering serves as a comprehensive resource hub for practitioners at all levels.

A curated list of awesome resources, tools, and other shiny things for LLM prompt engineering.

Pros of awesome-gpt-prompt-engineering

- More focused on practical applications and use cases for GPT prompt engineering

- Includes a section on prompt engineering tools and resources

- Provides examples of prompts for specific tasks and industries

Cons of awesome-gpt-prompt-engineering

- Less comprehensive coverage of academic research and theoretical aspects

- Fewer links to external resources and articles

- Smaller community engagement (fewer stars and contributors)

Code comparison

While both repositories primarily consist of curated lists and don't contain significant code samples, here's a comparison of their README structures:

awesome-gpt-prompt-engineering:

# Awesome GPT Prompt Engineering

## Table of Contents

- [Introduction](#introduction)

- [Prompt Engineering Techniques](#prompt-engineering-techniques)

- [Use Cases](#use-cases)

Awesome-Prompt-Engineering:

# Awesome Prompt Engineering

[](https://awesome.re)

A curated list of awesome resources for prompt engineering

## Contents

- [Prompt Engineering](#prompt-engineering)

- [Courses](#courses)

Both repositories use similar Markdown structures, but Awesome-Prompt-Engineering includes an "Awesome" badge and has a more extensive table of contents.

Convert  designs to code with AI

designs to code with AI

Introducing Visual Copilot: A new AI model to turn Figma designs to high quality code using your components.

Try Visual CopilotREADME

Awesome Prompt Engineering ð§ââï¸

This repository contains a hand-curated resources for Prompt Engineering with a focus on Generative Pre-trained Transformer (GPT), ChatGPT, PaLM etc

Prompt Engineering Course is coming soon..

Prompt Engineering Course is coming soon..

Table of Contents

- Papers

- Tools & Code

- Apis

- Datasets

- Models

- AI Content Detectors

- Educational

- Videos

- Books

- Communities

- How to Contribute

Papers

ð

-

Prompt Engineering Techniques:

-

Text Mining for Prompt Engineering: Text-Augmented Open Knowledge Graph Completion via PLMs [2023] (ACL)

-

A Prompt Pattern Catalog to Enhance Prompt Engineering with ChatGPT [2023] (Arxiv)

-

Hard Prompts Made Easy: Gradient-Based Discrete Optimization for Prompt Tuning and Discovery [2023] (Arxiv)

-

Synthetic Prompting: Generating Chain-of-Thought Demonstrations for Large Language Models [2023] (Arxiv)

-

Progressive Prompts: Continual Learning for Language Models [2023] (Arxiv)

-

Batch Prompting: Efficient Inference with LLM APIs [2023] (Arxiv)

-

Successive Prompting for Decompleting Complex Questions [2022] (Arxiv)

-

Structured Prompting: Scaling In-Context Learning to 1,000 Examples [2022] (Arxiv)

-

Large Language Models Are Human-Level Prompt Engineers [2022] (Arxiv)

-

Ask Me Anything: A simple strategy for prompting language models [2022] (Arxiv)

-

Decomposed Prompting: A Modular Approach for Solving Complex Tasks [2022] (Arxiv)

-

PromptChainer: Chaining Large Language Model Prompts through Visual Programming [2022] (Arxiv)

-

Investigating Prompt Engineering in Diffusion Models [2022] (Arxiv)

-

Show Your Work: Scratchpads for Intermediate Computation with Language Models [2021] (Arxiv)

-

Reframing Instructional Prompts to GPTk's Language [2021] (Arxiv)

-

Fantastically Ordered Prompts and Where to Find Them: Overcoming Few-Shot Prompt Order Sensitivity [2021] (Arxiv)

-

The Power of Scale for Parameter-Efficient Prompt Tuning [2021] (Arxiv)

-

Prompt Programming for Large Language Models: Beyond the Few-Shot Paradigm [2021] (Arxiv)

-

Prefix-Tuning: Optimizing Continuous Prompts for Generation [2021] (Arxiv)

-

-

Reasoning and In-Context Learning:

-

Multimodal Chain-of-Thought Reasoning in Language Models [2023] (Arxiv)

-

On Second Thought, Let's Not Think Step by Step! Bias and Toxicity in Zero-Shot Reasoning [2022] (Arxiv)

-

ReAct: Synergizing Reasoning and Acting in Language Models [2022] (Arxiv)

-

Language Models Are Greedy Reasoners: A Systematic Formal Analysis of Chain-of-Thought [2022] (Arxiv)

-

On the Advance of Making Language Models Better Reasoners [2022] (Arxiv)

-

Large Language Models are Zero-Shot Reasoners [2022] (Arxiv)

-

Reasoning Like Program Executors [2022] (Arxiv)

-

Self-Consistency Improves Chain of Thought Reasoning in Language Models [2022] (Arxiv)

-

Rethinking the Role of Demonstrations: What Makes In-Context Learning Work? [2022] (Arxiv)

-

Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering [2022] (Arxiv)

-

Chain of Thought Prompting Elicits Reasoning in Large Language Models [2021] (Arxiv)

-

Generated Knowledge Prompting for Commonsense Reasoning [2021] (Arxiv)

-

BERTese: Learning to Speak to BERT [2021] (Acl)

-

-

Evaluating and Improving Language Models:

-

Large Language Models Can Be Easily Distracted by Irrelevant Context [2023] (Arxiv)

-

Crawling the Internal Knowledge-Base of Language Models [2023] (Arxiv)

-

Discovering Language Model Behaviors with Model-Written Evaluations [2022] (Arxiv)

-

Calibrate Before Use: Improving Few-Shot Performance of Language Models [2021] (Arxiv)

-

-

Applications of Language Models:

-

Rephrase and Respond: Let Large Language Models Ask Better Questions for Themselves [2023] (Arxiv)

-

Prompting for Multimodal Hateful Meme Classification [2023] (Arxiv)

-

PLACES: Prompting Language Models for Social Conversation Synthesis [2023] (Arxiv)

-

Commonsense-Aware Prompting for Controllable Empathetic Dialogue Generation [2023] (Arxiv)

-

Legal Prompt Engineering for Multilingual Legal Judgement Prediction [2023] (Arxiv)

-

Conversing with Copilot: Exploring Prompt Engineering for Solving CS1 Problems Using Natural Language [2022] (Arxiv)

-

Plot Writing From Scratch Pre-Trained Language Models [2022] (Acl)

-

AutoPrompt: Eliciting Knowledge from Language Models with Automatically Generated Prompts [2020] (Arxiv)

-

-

Threat Detection and Adversarial Examples:

-

Constitutional AI: Harmlessness from AI Feedback [2022] (Arxiv)

-

Ignore Previous Prompt: Attack Techniques For Language Models [2022] (Arxiv)

-

Machine Generated Text: A Comprehensive Survey of Threat Models and Detection Methods [2022] (Arxiv)

-

Evaluating the Susceptibility of Pre-Trained Language Models via Handcrafted Adversarial Examples [2022] (Arxiv)

-

Toxicity Detection with Generative Prompt-based Inference [2022] (Arxiv)

-

How Can We Know What Language Models Know? [2020] (Mit)

-

-

Few-shot Learning and Performance Optimization:

-

Promptagator: Few-shot Dense Retrieval From 8 Examples [2022] (Arxiv)

-

The Unreliability of Explanations in Few-shot Prompting for Textual Reasoning [2022] (Arxiv)

-

Making Pre-trained Language Models Better Few-shot Learners [2021] (Acl)

-

Language Models are Few-Shot Learners [2020] (Arxiv)

-

-

Text to Image Generation:

- A Taxonomy of Prompt Modifiers for Text-To-Image Generation [2022] (Arxiv)

- Design Guidelines for Prompt Engineering Text-to-Image Generative Models [2021] (Arxiv)

- High-Resolution Image Synthesis with Latent Diffusion Models [2021] (Arxiv)

- DALL·E: Creating Images from Text [2021] (Arxiv)

-

Text to Music/Sound Generation:

- MusicLM: Generating Music From Text [2023] (Arxiv)

- ERNIE-Music: Text-to-Waveform Music Generation with Diffusion Models [2023] (Arxiv)

- Noise2Music: Text-conditioned Music Generation with Diffusion Models [2023) (Arxiv)

- AudioLM: a Language Modeling Approach to Audio Generation [2023] (Arxiv)

- Make-An-Audio: Text-To-Audio Generation with Prompt-Enhanced Diffusion Models [2023] (Arxiv)

-

Text to Video Generation:

-

Dreamix: Video Diffusion Models are General Video Editors [2023] (Arxiv)

-

Tune-A-Video: One-Shot Tuning of Image Diffusion Models for Text-to-Video Generation [2022] (Arxiv)

-

Noise2Music: Text-conditioned Music Generation with Diffusion Models [2023) (Arxiv)

-

AudioLM: a Language Modeling Approach to Audio Generation [2023] (Arxiv)

-

-

Overviews:

Tools & Code

ð§

| Name | Description | Url |

|---|---|---|

| LlamaIndex | LlamaIndex is a project consisting of a set of data structures designed to make it easier to use large external knowledge bases with LLMs. | [Github] |

| Promptify | Solve NLP Problems with LLM's & Easily generate different NLP Task prompts for popular generative models like GPT, PaLM, and more with Promptify | [Github] |

| Arize-Phoenix | Open-source tool for ML observability that runs in your notebook environment. Monitor and fine tune LLM, CV and Tabular Models. | [Github] |

| Better Prompt | Test suite for LLM prompts before pushing them to PROD | [Github] |

| CometLLM | Log, visualize, and evaluate your LLM prompts, prompt templates, prompt variables, metadata, and more. | [Github] |

| Embedchain | Framework to create ChatGPT like bots over your dataset | [Github] |

| Interactive Composition Explorerx | ICE is a Python library and trace visualizer for language model programs. | [Github] |

| Haystack | Open source NLP framework to interact with your data using LLMs and Transformers. | [Github] |

| LangChainx | Building applications with LLMs through composability | [Github] |

| OpenPrompt | An Open-Source Framework for Prompt-learning | [Github] |

| Prompt Engine | This repo contains an NPM utility library for creating and maintaining prompts for Large Language Models (LLMs). | [Github] |

| PromptInject | PromptInject is a framework that assembles prompts in a modular fashion to provide a quantitative analysis of the robustness of LLMs to adversarial prompt attacks. | [Github] |

| Prompts AI | Advanced playground for GPT-3 | [Github] |

| Prompt Source | PromptSource is a toolkit for creating, sharing and using natural language prompts. | [Github] |

| ThoughtSource | A framework for the science of machine thinking | [Github] |

| PROMPTMETHEUS | One-shot Prompt Engineering Toolkit | [Tool] |

| AI Config | An Open-Source configuration based framework for building applications with LLMs | [Github] |

| LastMile AI | Notebook-like playground for interacting with LLMs across different modalities (text, speech, audio, image) | [Tool] |

| XpulsAI | Effortlessly build scalable AI Apps. AutoOps platform for AI & ML | [Tool] |

| Agenta | Agenta is an open-source LLM developer platform with the tools for prompt management, evaluation, human feedback, and deployment all in one place. | [Github] |

| Promptotype | Develop, test, and monitor your LLM { structured } tasks | [Tool] |

Apis

ð»

| Name | Description | Url | Paid or Open-Source |

|---|---|---|---|

| OpenAI | GPT-n for natural language tasks, Codex for translates natural language to code, and DALL·E for creates and edits original images | [OpenAI] | Paid |

| CohereAI | Cohere provides access to advanced Large Language Models and NLP tools through one API | [CohereAI] | Paid |

| Anthropic | Coming soon | [Anthropic] | Paid |

| FLAN-T5 XXL | Coming soon | [HuggingFace] | Open-Source |

Datasets

ð¾

| Name | Description | Url |

|---|---|---|

| P3 (Public Pool of Prompts) | P3 (Public Pool of Prompts) is a collection of prompted English datasets covering a diverse set of NLP tasks. | [HuggingFace] |

| Awesome ChatGPT Prompts | Repo includes ChatGPT prompt curation to use ChatGPT better. | [Github] |

| Writing Prompts | Collection of a large dataset of 300K human-written stories paired with writing prompts from an online forum(reddit) | [Kaggle] |

| Midjourney Prompts | Text prompts and image URLs scraped from MidJourney's public Discord server | [HuggingFace] |

Models

ð§

| Name | Description | Url |

|---|---|---|

| ChatGPT | ChatGPT | [OpenAI] |

| Codex | The Codex models are descendants of our GPT-3 models that can understand and generate code. Their training data contains both natural language and billions of lines of public code from GitHub | [Github] |

| Bloom | BigScience Large Open-science Open-access Multilingual Language Model | [HuggingFace] |

| Facebook LLM | OPT-175B is a GPT-3 equivalent model trained by Meta. It is by far the largest pretrained language model available with 175 billion parameters. | [Alpa] |

| GPT-NeoX | GPT-NeoX-20B, a 20 billion parameter autoregressive language model trained on the Pile | [HuggingFace] |

| FLAN-T5 XXL | Flan-T5 is an instruction-tuned model, meaning that it exhibits zero-shot-like behavior when given instructions as part of the prompt. | [HuggingFace/Google] |

| XLM-RoBERTa-XL | XLM-RoBERTa-XL model pre-trained on 2.5TB of filtered CommonCrawl data containing 100 languages. | [HuggingFace] |

| GPT-J | It is a GPT-2-like causal language model trained on the Pile dataset | [HuggingFace] |

| PaLM-rlhf-pytorch | Implementation of RLHF (Reinforcement Learning with Human Feedback) on top of the PaLM architecture. Basically ChatGPT but with PaLM | [Github] |

| GPT-Neo | An implementation of model parallel GPT-2 and GPT-3-style models using the mesh-tensorflow library. | [Github] |

| LaMDA-rlhf-pytorch | Open-source pre-training implementation of Google's LaMDA in PyTorch. Adding RLHF similar to ChatGPT. | [Github] |

| RLHF | Implementation of Reinforcement Learning from Human Feedback (RLHF) | [Github] |

| GLM-130B | GLM-130B: An Open Bilingual Pre-Trained Model | [Github] |

| Mixtral-84B | Mixtral-84B is a Mixture of Expert (MOE) model with 8 experts per MLP. | [HuggingFace] |

AI Content Detectors

ð

| Name | Description | Url |

|---|---|---|

| AI Text Classifier | The AI Text Classifier is a fine-tuned GPT model that predicts how likely it is that a piece of text was generated by AI from a variety of sources, such as ChatGPT. | [OpenAI] |

| GPT-2 Output Detector | This is an online demo of the GPT-2 output detector model, based on the ð¤/Transformers implementation of RoBERTa. | [HuggingFace] |

| Openai Detector | AI classifier for indicating AI-written text (OpenAI Detector Python wrapper) | [GitHub] |

Courses

ð©âð«

- ChatGPT Prompt Engineering for Developers, by deeplearning.ai

- Prompt Engineering for Vision Models by DeepLearning.AI

Tutorials

ð

-

Introduction to Prompt Engineering

-

Beginner's Guide to Generative Language Models

-

Best Practices for Prompt Engineering

-

Complete Guide to Prompt Engineering

-

Technical Aspects of Prompt Engineering

-

Resources for Prompt Engineering

Videos

ð¥

- Advanced ChatGPT Prompt Engineering

- ChatGPT: 5 Prompt Engineering Secrets For Beginners

- CMU Advanced NLP 2022: Prompting

- Prompt Engineering - A new profession ?

- ChatGPT Guide: 10x Your Results with Better Prompts

- Language Models and Prompt Engineering: Systematic Survey of Prompting Methods in NLP

- Prompt Engineering 101: Autocomplete, Zero-shot, One-shot, and Few-shot prompting

Communities

ð¤

How to Contribute

We welcome contributions to this list! In fact, that's the main reason why I created it - to encourage contributions and encourage people to subscribe to changes in order to stay informed about new and exciting developments in the world of Large Language Models(LLMs) & Prompt-Engineering.

Before contributing, please take a moment to review our contribution guidelines. These guidelines will help ensure that your contributions align with our objectives and meet our standards for quality and relevance. Thank you for your interest in contributing to this project!

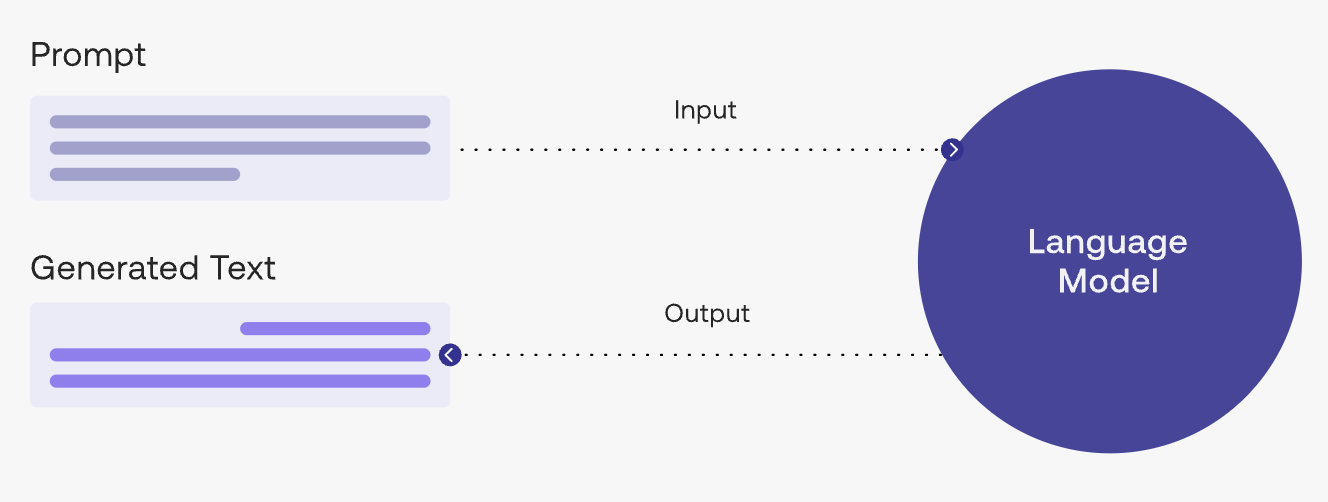

Image Source: docs.cohere.ai

Top Related Projects

🐙 Guides, papers, lecture, notebooks and resources for prompt engineering

A library for helping developers craft prompts for Large Language Models

This repo includes ChatGPT prompt curation to use ChatGPT and other LLM tools better.

Examples and guides for using the OpenAI API

Prompt Engineering, Generative AI, and LLM Guide by Learn Prompting | Join our discord for the largest Prompt Engineering learning community

A curated list of awesome resources, tools, and other shiny things for LLM prompt engineering.

Convert  designs to code with AI

designs to code with AI

Introducing Visual Copilot: A new AI model to turn Figma designs to high quality code using your components.

Try Visual Copilot